25.03.2026 | Blog

Enterprise Search with Chatbot and RAG explained

TL;DR: Key takeaways at a glance

✓ In enterprise environments, RAG only works with clean retrieval: relevant content is found, permissions are checked, and the right passages are passed to the language model as context.

✓ In practice, success depends above all on data connectivity, access control, relevance ranking, and governance, not just on a good model.

What is Enterprise Search?

Enterprise Search makes information findable across data silos and application boundaries in companies and public-sector organizations. Knowledge from document management systems (DMS), intranets, wikis, ticketing systems, CRM systems, or file shares becomes centrally accessible, so users no longer have to search each tool individually. Typical characteristics include:

- Connectors to internal systems

Content from relevant sources is connected and continuously updated, including versions and permissions.

- Indexing of content and metadata

In addition to full text, metadata such as document type, department, or validity is captured so that results can be better categorized and filtered.

- Relevance ranking

Text content, metadata, popularity, freshness, and usage context help rank the most relevant content at the top.

- Access permissions

Read and access rights are inherited from the source systems and automatically integrated into search, for example through role-based access or ACL checks. Users only see what they are authorized to access.

- Filters, synonyms, and domain-specific terminology

Filters make it easier to narrow results, while synonym and abbreviation logic helps with company-specific language.
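To make these characteristics more concrete, here is a minimal Python sketch of a permission-aware search over a small in-memory index. It is an illustration under simplifying assumptions, not how IntraFind or any particular product implements search: the `Doc` fields, the `SYNONYMS` table, the term-overlap score, and the 180-day freshness half-life are all hypothetical choices.

```python
import re
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

@dataclass
class Doc:
    doc_id: str
    text: str
    doc_type: str          # metadata, e.g. "policy" or "memo"
    department: str        # metadata, e.g. "HR"
    updated: date          # last change, used for the freshness boost
    allowed_groups: set[str] = field(default_factory=set)  # ACL inherited from the source system

# Hypothetical company-specific synonym and abbreviation table.
SYNONYMS: dict[str, set[str]] = {
    "wfh": {"mobile work", "remote work"},
    "pto": {"vacation", "leave"},
}

def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())

def expand_query(query: str) -> set[str]:
    """Return the query terms plus the tokens of any synonyms or expanded abbreviations."""
    terms = set(tokenize(query))
    for term in list(terms):
        for phrase in SYNONYMS.get(term, set()):
            terms.update(tokenize(phrase))
    return terms

def score(doc: Doc, terms: set[str], today: date, half_life_days: float = 180.0) -> float:
    """Count matching terms and weight them by an exponential freshness decay (illustrative only)."""
    matches = sum(1 for token in tokenize(doc.text) if token in terms)
    if matches == 0:
        return 0.0
    age_days = (today - doc.updated).days
    freshness = 0.5 ** (age_days / half_life_days)  # 1.0 for new documents, 0.5 after one half-life
    return matches * (0.5 + 0.5 * freshness)

def search(docs: list[Doc], query: str, user_groups: set[str], today: date,
           doc_type: Optional[str] = None, top_k: int = 5) -> list[str]:
    terms = expand_query(query)
    ranked = []
    for doc in docs:
        if not (doc.allowed_groups & user_groups):
            continue  # ACL check: users only see what the source system allows
        if doc_type is not None and doc.doc_type != doc_type:
            continue  # metadata filter chosen by the user
        s = score(doc, terms, today)
        if s > 0:
            ranked.append((s, doc.doc_id))
    ranked.sort(reverse=True)
    return [doc_id for _, doc_id in ranked[:top_k]]

docs = [
    Doc("hr-1", "Policy for mobile work and remote work days", "policy", "HR", date(2025, 11, 1), {"all-staff"}),
    Doc("hr-2", "Old policy for mobile work", "policy", "HR", date(2021, 1, 1), {"all-staff"}),
    Doc("fin-1", "Budget for remote work equipment", "memo", "Finance", date(2025, 12, 1), {"finance"}),
]
print(search(docs, "WFH rules", user_groups={"all-staff"}, today=date(2026, 3, 25)))
# → ['hr-1', 'hr-2']
```

For a user in the `all-staff` group, the query "WFH rules" matches both HR policies only through the synonym expansion; the newer policy ranks first because of the freshness boost, and the finance memo is never considered because the ACL check excludes it. A production system would use an inverted index and an established relevance model such as BM25 rather than a linear scan.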

How does an AI assistant complement Enterprise Search?

An AI assistant, often also described as an enterprise chatbot, complements Enterprise Search by changing the interaction model: instead of delivering result lists with highlighted matches, it can prepare information in dialogue, summarize it, and place it in the user’s context.

- Instead of “reading and piecing together search results”: answers in dialogue - The AI assistant summarizes relevant content and explains it clearly.

- Instead of a pure result list: summary + context + next steps - Users get action-oriented answers more quickly, for example in the form of a short instruction or checklist.

- Instead of rigid query formulation: follow-up questions, clarification, and iteration - The assistant resolves ambiguities and guides the user step by step to the right answer.

Important: classic Enterprise Search remains ideal when a specific document needs to be found or original sources need to be explored. A chatbot is often an additional interface that condenses search results and prepares them in a practical way.

Chatbots, assistants, agents: terms and distinctions

These terms are often used inconsistently. For orientation, this distinction is usually sufficient:

- Enterprise chatbot: answers questions in dialogue based on connected knowledge sources

- AI assistant: additionally supports concrete tasks and work contexts, for example through summaries, follow-up questions, or document-based interaction

- Agent: can independently carry out multi-step actions using rules and tools

What does it mean to “chat with your own data” in an enterprise context? In practice, the AI assistant uses internal sources such as documents, wiki content, tickets, or policies as context. Responses must respect governance requirements, for example by relying only on approved, traceable sources that the user is authorized to access.

How this differs from simple FAQ bots: FAQ bots often provide predefined answers according to a simple question → answer pattern. Enterprise chatbots used in knowledge contexts, by contrast, require retrieval, access control, monitoring, and quality management.

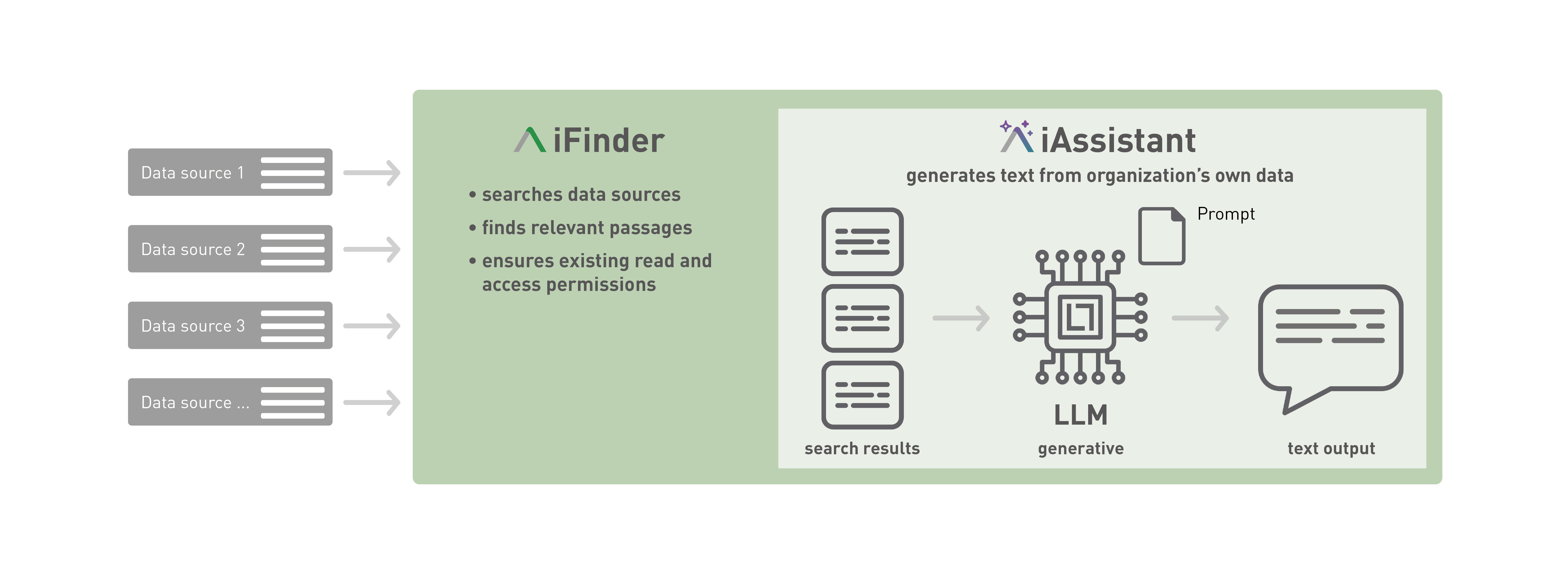

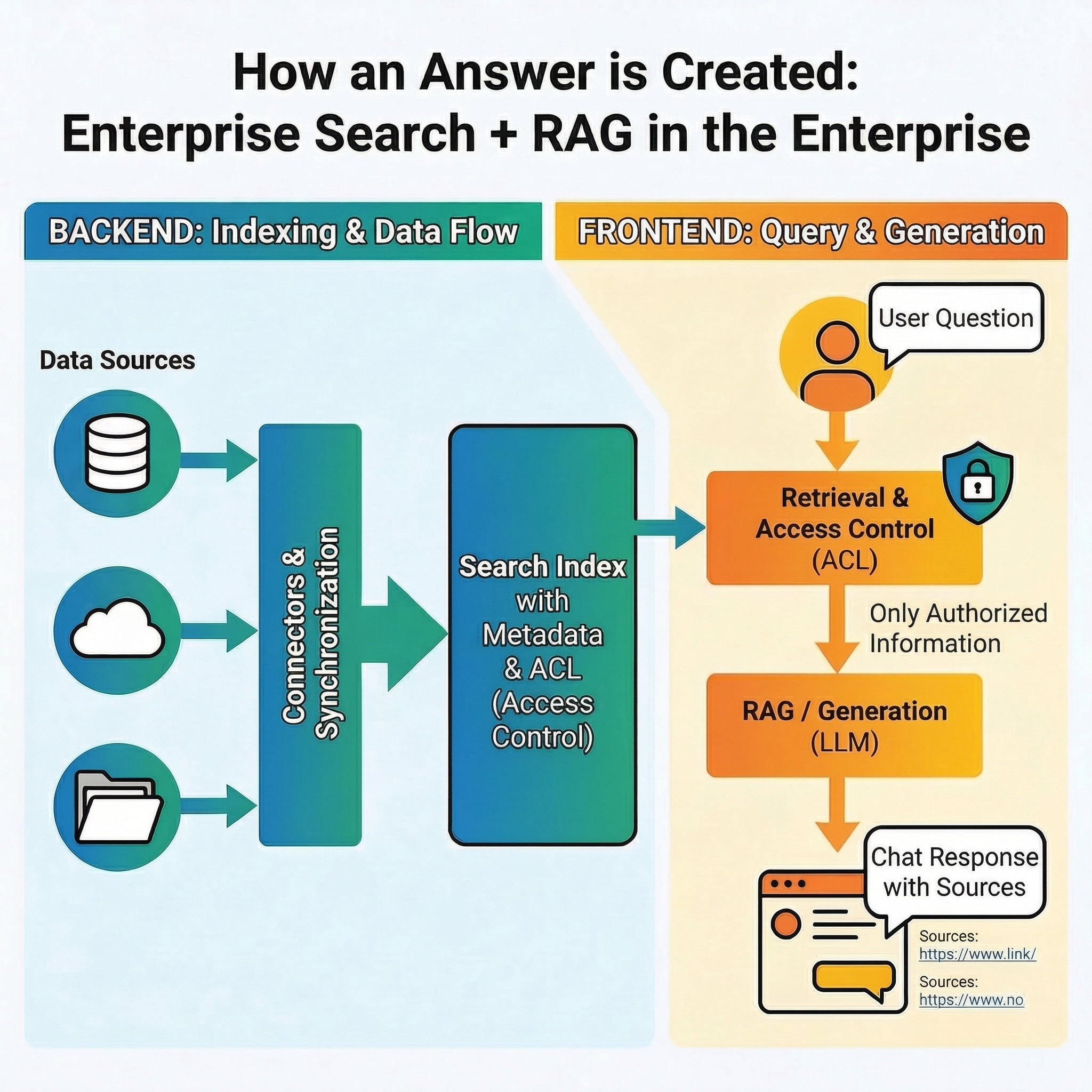

How does RAG work in an enterprise context?

RAG stands for Retrieval-Augmented Generation. It combines the retrieval of relevant content with generative answer creation. Rather than answering questions from general model knowledge alone, a RAG system uses relevant internal content as context, which is why RAG often forms the technical foundation of an enterprise chatbot. In enterprise environments, RAG is typically built on top of Enterprise Search: the search infrastructure, with its connectors, relevance ranking, metadata, and role- and permission-based access control, ensures that relevant and authorized content is found even across large and heterogeneous data sets, and RAG uses exactly this content to formulate a grounded answer.

In simplified form, the process works as follows:

- A user asks a question.

- The system searches for suitable content in the connected sources.

- Permissions and relevance are checked.

- Relevant passages are passed to the language model as context.

- The model generates an answer, ideally supplemented with source references.
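The five steps above can be sketched in Python as follows. This is a hedged illustration, not a reference implementation: the keyword-overlap retrieval stands in for a real Enterprise Search call, `generate` is a hypothetical placeholder for whatever language model client is used, and the prompt wording and the `min_overlap` threshold are assumptions.

```python
import re
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Passage:
    source: str                     # shown to the user as the source reference
    text: str
    allowed_groups: frozenset[str]  # ACL inherited from the source system

def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def answer_question(question: str, passages: list[Passage], user_groups: set[str],
                    generate: Callable[[str], str], k: int = 3, min_overlap: int = 2) -> dict:
    # Step 1: the user's question arrives as `question`.
    query_terms = tokens(question)
    # Steps 2 and 3: search the connected content, keep only authorized and relevant passages.
    candidates = []
    for p in passages:
        if not (p.allowed_groups & user_groups):
            continue
        overlap = len(query_terms & tokens(p.text))
        if overlap >= min_overlap:
            candidates.append((overlap, p))
    candidates.sort(key=lambda pair: pair[0], reverse=True)
    context = [p for _, p in candidates[:k]]
    if not context:
        # No authorized, relevant source: return a fallback instead of letting the model guess.
        return {"answer": "No approved source found for this question.", "sources": []}
    # Step 4: pass the selected passages to the model as numbered context.
    numbered = "\n".join(f"[{i}] {p.text}" for i, p in enumerate(context, start=1))
    prompt = ("Answer only from the numbered sources below and cite them as [n]. "
              "If they do not contain the answer, say so.\n\n"
              f"{numbered}\n\nQuestion: {question}")
    # Step 5: generate the answer and return it together with the source references.
    return {"answer": generate(prompt), "sources": [p.source for p in context]}

passages = [
    Passage("HR policy: sick leave", "Sick leave must be reported to your manager on the first day.",
            frozenset({"all-staff"})),
    Passage("Board minutes 2025", "Sick leave statistics discussed by the board.", frozenset({"board"})),
]

def fake_llm(prompt: str) -> str:
    """Stand-in for a real language model call."""
    return "Report it to your manager on the first day [1]."

result = answer_question("How do I report sick leave?", passages, {"all-staff"}, fake_llm)
print(result["sources"])
# → ['HR policy: sick leave']
```

The board minutes never reach the prompt because the user lacks the `board` group, and a question without a sufficiently relevant, authorized passage returns a fallback instead of calling the model at all.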

Typical use cases for Enterprise Search with an AI assistant

HR and employee services - Employees ask about onboarding, sick leave rules, benefits, expenses, or policies for mobile work. Instead of manually searching through multiple policies, the AI assistant provides an understandable answer and points to the valid source.

IT service and helpdesk - Users look for instructions, known issues, access paths, or standard processes. The AI assistant uses knowledge articles, runbooks, and tickets and suggests concrete next steps.

Sales and pre-sales - Sales teams need quick access to product information, case studies, proposal building blocks, or answers to frequently asked customer questions. An AI assistant makes this content easier to find and directly usable.

Customer service and contact center - Service teams can prepare answers from knowledge bases, manuals, and process documents more quickly. This speeds up service delivery and helps ensure more consistent responses in customer interactions. In some scenarios, customers may also receive answers directly through self-service.

Project and knowledge management - A lot of time is often lost searching for the latest presentation or decision document and researching the current state of knowledge. Here, an AI assistant can do more than search; it can also summarize content directly.

Conclusion

Enterprise Search and chatbots complement each other well. Enterprise Search provides the foundation for making knowledge from many systems findable. A chatbot or AI assistant builds on that foundation and makes this knowledge usable in dialogue. Practical success depends not only on good models, but above all on clean data integration, access control, relevance, governance, and quality management.

Organizations planning a GenAI initiative can test IntraFind’s Enterprise Search with generative AI free of charge.

FAQ

Enterprise Search finds content such as documents, pages, or records and returns result lists. An enterprise chatbot uses this content to formulate, summarize, or explain answers in dialogue, ideally with source references and with permissions considered.

“Chatting with your own data” means that, to answer questions, the chatbot accesses organization-specific content, such as policies, manuals, tickets, or wikis, and uses it as context for the answer instead of generating only from “model knowledge.”

RAG (Retrieval-Augmented Generation) combines retrieval with text generation. This allows answers to be more strongly grounded in current internal sources. The quality of the answer depends heavily on the quality of the retrieval and the underlying sources.

In enterprise environments, permissions should be enforced during retrieval, for example through ACLs or roles, so that the enterprise chatbot only uses content as context that the user is allowed to see. Permissions must be considered not only in search, but also when providing context to the language model.
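Why the permission check belongs in the retrieval step, and not only after the top results have been picked, can be shown with a small Python sketch. The `Hit` structure, the group names, and the scores are hypothetical; in a real system the ACL condition would typically be part of the search query itself.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Hit:
    doc_id: str
    score: float
    allowed_groups: frozenset[str]  # ACL taken over from the source system

def is_visible(hit: Hit, user_groups: set[str]) -> bool:
    return bool(hit.allowed_groups & user_groups)

def filter_after_top_k(hits: list[Hit], user_groups: set[str], k: int) -> list[str]:
    """Select the k best hits first, then drop the ones the user may not see."""
    top = sorted(hits, key=lambda h: h.score, reverse=True)[:k]
    return [h.doc_id for h in top if is_visible(h, user_groups)]

def filter_during_retrieval(hits: list[Hit], user_groups: set[str], k: int) -> list[str]:
    """Apply the permission check first, so only authorized hits compete for the k slots."""
    allowed = [h for h in hits if is_visible(h, user_groups)]
    return [h.doc_id for h in sorted(allowed, key=lambda h: h.score, reverse=True)[:k]]

hits = [
    Hit("board-1", 0.95, frozenset({"board"})),
    Hit("board-2", 0.90, frozenset({"board"})),
    Hit("hr-1", 0.70, frozenset({"all-staff"})),
    Hit("hr-2", 0.60, frozenset({"all-staff"})),
]
employee = {"all-staff"}
print(filter_after_top_k(hits, employee, k=2))       # → []
print(filter_during_retrieval(hits, employee, k=2))  # → ['hr-1', 'hr-2']
```

With k = 2, filtering after the top-k selection leaves the chatbot with no context at all, because both top-scoring hits belong to the board. Filtering during retrieval lets the authorized HR documents fill the slots. Neither variant leaks content, but only the second gives the language model usable context.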

Answer reliability can be improved through strong retrieval quality (relevance, metadata, and hybrid search), clear answer rules such as “if there is no source, do not make a factual claim,” source references, testing with benchmark questions, and ongoing monitoring.
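Testing with benchmark questions and checking source references can be partly automated. The following Python sketch assumes a hypothetical benchmark that maps each question to the ID of the source a good retrieval should return, and assumes answers cite sources as `[n]`; all IDs and retrieval results are invented for illustration, and hit rate at k is only one simple metric among many.

```python
import re
from typing import Callable

def hit_rate_at_k(cases: list[tuple[str, str]], retrieve: Callable[[str], list[str]], k: int = 3) -> float:
    """Share of benchmark questions whose expected source ID appears among the top-k retrieved IDs."""
    if not cases:
        raise ValueError("the benchmark needs at least one question")
    hits = sum(1 for question, expected_id in cases if expected_id in retrieve(question)[:k])
    return hits / len(cases)

def has_valid_citations(answer: str, num_sources: int) -> bool:
    """Answer rule check: at least one [n] reference, and every n points to a provided source."""
    cited = [int(n) for n in re.findall(r"\[(\d+)\]", answer)]
    return bool(cited) and all(1 <= n <= num_sources for n in cited)

# Hypothetical retrieval results and benchmark cases, for illustration only.
fake_index = {
    "How do I report sick leave?": ["hr-sick-leave", "hr-benefits"],
    "How do I reset my VPN token?": ["it-faq", "it-vpn-runbook"],
    "What is the travel expense limit?": ["sales-deck"],
}
cases = [
    ("How do I report sick leave?", "hr-sick-leave"),
    ("How do I reset my VPN token?", "it-vpn-runbook"),
    ("What is the travel expense limit?", "fin-travel-policy"),
]

def retrieve(question: str) -> list[str]:
    return fake_index.get(question, [])

print(round(hit_rate_at_k(cases, retrieve, k=1), 2))  # → 0.33
print(round(hit_rate_at_k(cases, retrieve, k=2), 2))  # → 0.67
print(has_valid_citations("Report it on the first day [1].", num_sources=1))  # → True
print(has_valid_citations("Report it on the first day.", num_sources=1))      # → False
```

Tracking such numbers over time, for example after adding a new connector or changing the ranking, is one way to turn "ongoing monitoring" into a concrete check.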


The author

Sonja Bellaire